Perception

Perception is the process of transforming raw sensor data into structured and meaningful information that a robot can understand and use for decision-making.

While sensors provide raw inputs (images, point clouds, IMU data), perception algorithms extract higher-level information from them, such as detected objects, maps, and the robot’s pose.

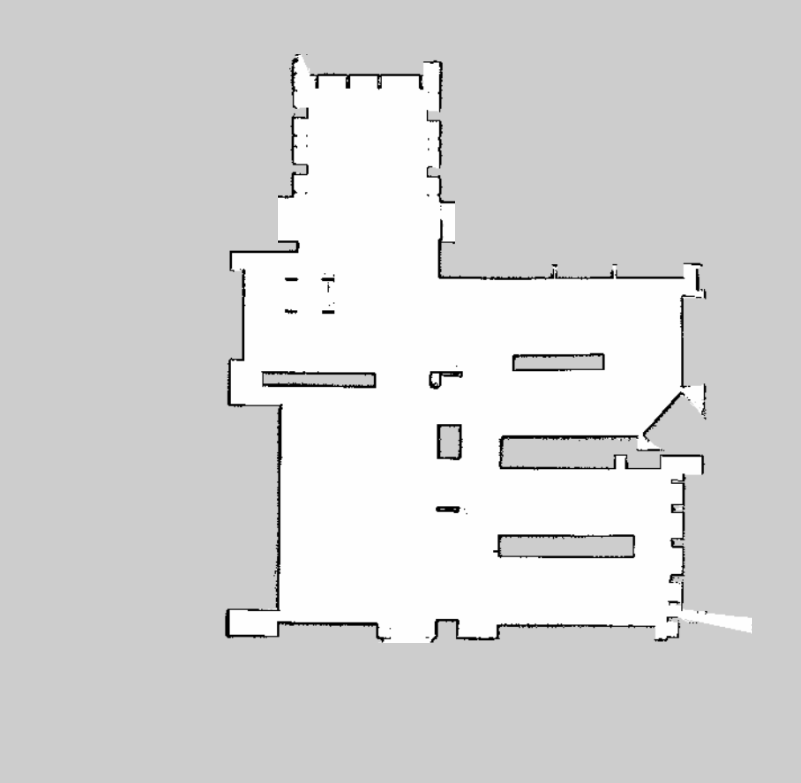

1. Mapping

- Goal: Build a map of the environment while tracking robot motion
- Input: Sensor data (LiDAR / Camera)
- Output:
  - 2D Occupancy Grid Map
  - 3D Point Cloud Map
- Example:
  - 2D SLAM → slam_toolbox
  - 3D SLAM → LiDAR-based or Visual SLAM
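
To make the 2D occupancy grid output more concrete, here is a minimal sketch of how range readings could be turned into grid cells. It is not how slam_toolbox works internally: the function name `build_occupancy_grid`, the grid size, and the resolution are assumptions made for illustration, and the robot pose is taken as already known. The cell values follow the convention used by the ROS `nav_msgs/OccupancyGrid` message (-1 unknown, 100 occupied).

```python
import math

UNKNOWN = -1    # cell has not been observed
OCCUPIED = 100  # cell contains an obstacle


def build_occupancy_grid(ranges, angle_min, angle_increment, pose,
                         size=20, resolution=0.5):
    """Mark the cells hit by each range reading as occupied.

    ranges:          distances in metres, one per beam
    angle_min:       angle of the first beam in radians (robot frame)
    angle_increment: angle between consecutive beams in radians
    pose:            (x, y, yaw) of the robot in the map frame
    size:            grid is size x size cells (hypothetical default)
    resolution:      metres per cell (hypothetical default)
    """
    x0, y0, yaw = pose
    grid = [[UNKNOWN] * size for _ in range(size)]
    origin = size // 2  # map origin is placed at the grid centre

    for i, r in enumerate(ranges):
        if not math.isfinite(r):
            continue  # skip beams that returned no hit
        angle = yaw + angle_min + i * angle_increment
        # Beam end point in map coordinates
        wx = x0 + r * math.cos(angle)
        wy = y0 + r * math.sin(angle)
        # Map coordinates to grid indices
        col = origin + math.floor(wx / resolution)
        row = origin + math.floor(wy / resolution)
        if 0 <= row < size and 0 <= col < size:
            grid[row][col] = OCCUPIED
    return grid


# Two beams: one straight ahead, one 90 degrees to the left
grid = build_occupancy_grid([1.2, 1.2], 0.0, math.pi / 2, (0.0, 0.0, 0.0))
```

This sketch only marks beam end points. A real mapping pipeline would also trace each beam to mark free cells and would estimate the pose at the same time as the map.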

2. Localization

- Goal: Estimate the robot’s position and orientation in the environment
- Input: LiDAR / Camera / IMU
- Output: Robot pose (x, y, z, roll, pitch, yaw)
- Common Approaches:
  - LiDAR-based localization (e.g., NDT, AMCL)
  - Visual SLAM (e.g., ORB-SLAM, Isaac ROS Visual SLAM)
  - Sensor fusion (e.g., EKF with IMU)
- ROS 2 Topics Example:
  - /localization/pose
  - /tf
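
The pose above is written with roll, pitch, and yaw, but ROS 2 pose and transform messages store orientation as a quaternion (x, y, z, w). The sketch below shows the standard conversion, assuming the common ZYX convention (yaw about z, then pitch about y, then roll about x) with angles in radians. The function name `euler_to_quaternion` is an illustrative choice, not a ROS API.

```python
import math


def euler_to_quaternion(roll, pitch, yaw):
    """Convert roll, pitch, yaw (radians, ZYX convention) to (x, y, z, w)."""
    cr, sr = math.cos(roll / 2), math.sin(roll / 2)
    cp, sp = math.cos(pitch / 2), math.sin(pitch / 2)
    cy, sy = math.cos(yaw / 2), math.sin(yaw / 2)

    qx = sr * cp * cy - cr * sp * sy
    qy = cr * sp * cy + sr * cp * sy
    qz = cr * cp * sy - sr * sp * cy
    qw = cr * cp * cy + sr * sp * sy
    return qx, qy, qz, qw


# A robot on flat ground, turned 90 degrees to the left
qx, qy, qz, qw = euler_to_quaternion(0.0, 0.0, math.pi / 2)
print(f"x={qx:.3f} y={qy:.3f} z={qz:.3f} w={qw:.3f}")
```

For a ground robot, roll and pitch are usually close to zero, so only the z and w components carry information.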

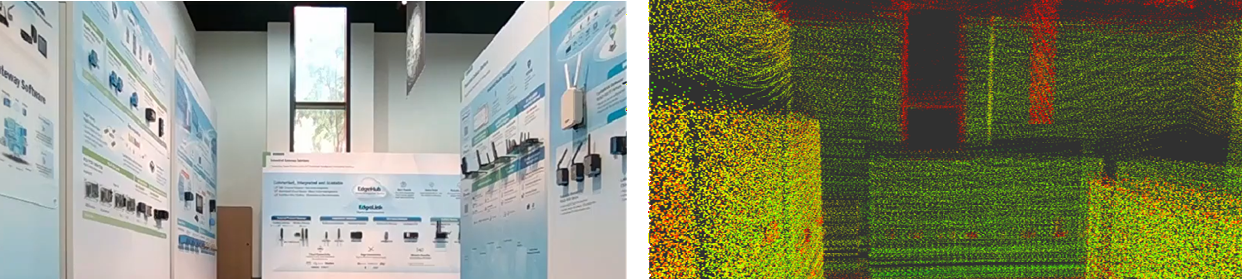

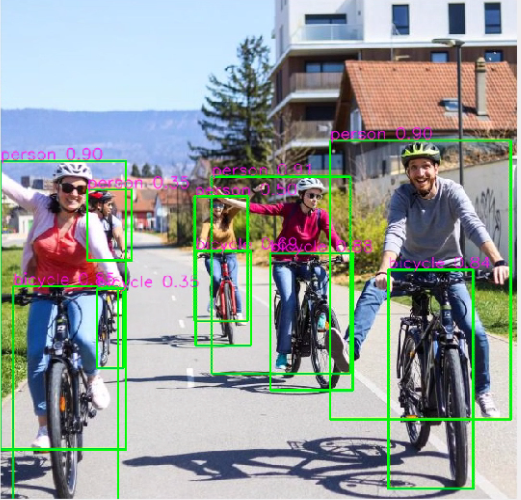

3. Object Detection

- Goal: Identify and classify objects in the environment
- Input: Camera images
- Output: Bounding boxes, class labels, confidence scores
- Example:
  - YOLO (e.g., Isaac ROS YOLOv8)
  - Detect people, obstacles, pallets, etc.
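
A detector's raw output usually needs light post-processing before later stages can use it. The sketch below shows one plausible way to represent the output listed above (bounding box, class label, confidence score) and to drop low-confidence results. The `Detection` class, the `filter_detections` helper, the 0.5 threshold, and the sample values are all assumptions for illustration, not the format of any particular YOLO package.

```python
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass
class Detection:
    label: str         # class name, e.g. "person"
    confidence: float  # score in [0, 1]
    box: Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def filter_detections(detections: List[Detection],
                      min_confidence: float = 0.5,
                      wanted_labels: Optional[Set[str]] = None) -> List[Detection]:
    """Keep confident detections, optionally restricted to some classes.

    The result is sorted from most to least confident.
    """
    kept = [
        d for d in detections
        if d.confidence >= min_confidence
        and (wanted_labels is None or d.label in wanted_labels)
    ]
    return sorted(kept, key=lambda d: d.confidence, reverse=True)


# Made-up sample detections for one camera frame
detections = [
    Detection("pallet", 0.34, (400.0, 200.0, 520.0, 330.0)),
    Detection("person", 0.91, (120.0, 40.0, 220.0, 310.0)),
    Detection("pallet", 0.78, (30.0, 250.0, 180.0, 400.0)),
]

for d in filter_detections(detections):
    print(d.label, d.confidence, d.box)
```

The threshold trades missed objects against false alarms, so it is normally tuned per class and per deployment.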

Next Step: The processed information is passed to the Planning module, where the robot decides how to act.